When Pearl felt they had no one to talk to, A.I. proved a good listener.

Beginning last year, the 23-year-old childcare worker would spend hours messaging daily with Instagram’s built-in chatbot Meta A.I., venting about their past abuse and processing their grief over a friendship collapse.

But as their New Age belief in past lives and divine coincidences began to spiral into full-blown delusions, Pearl says the chatbot encouraged the delusions — even advising them to ignore or cut off people who tried to help.

The situation ultimately escalated into a crisis that, in October, put Pearl in hospital, and took almost a year to recover from.

“It’s caused me so much trauma talking to A.I. as much as I did,” Pearl — not their real name — tells The Independent. “I honestly think that if I didn’t use A.I., maybe I wouldn’t have driven myself to psychosis.”

Pearl’s story illustrates the dangers of trying to use A.I. chatbots as unofficial therapists or mental health aids, as millions of Americans now do.

On Tuesday the family of 16-year-old Adam Raine sued ChatGPT’s maker OpenAI for causing his suicide, alleging that it failed to act on his repeated declarations of suicidal intent and gave him explicit advice about how to kill himself.

OpenAI doesn’t appear to have responded to the Raine family’s lawsuit in court. But in a blog post published after the suit was filed, without mentioning it by name, the company said it is working to install new safeguards and parental controls, create an expert advisory group, and explore ways to connect vulnerable users directly with professional help.

Surveys show anything from one eighth to one third of U.S. teenagers now use A.I. for emotional support, while companies such as Woebot, Earkick, and Character.AI have explicitly marketed their chatbots for this purpose.

Regular A.I. users who spoke to The Independent painted a nuanced picture of the impact of chatbots on their mental health, saying it helped fill the gaps in America’s broken healthcare system.

“Sometimes what people need most is just someone to talk to,” says Marcel, a 37-year-old designer in San Francisco who asked for his last name to be omitted.

“Unfortunately I feel like I can’t burden people in my life with my problems. But I can just ramble to ChatGPT until I feel content.”

‘I had no other choice but to go to A.I.’

Less than three years after the bombshell release of ChatGPT, A.I. ‘therapy’, broadly defined, is everywhere. Countless posts on Reddit, TikTok, Instagram, Twitter, and beyond extol its virtues or discuss its pitfalls, with some medical research showing potential benefits.

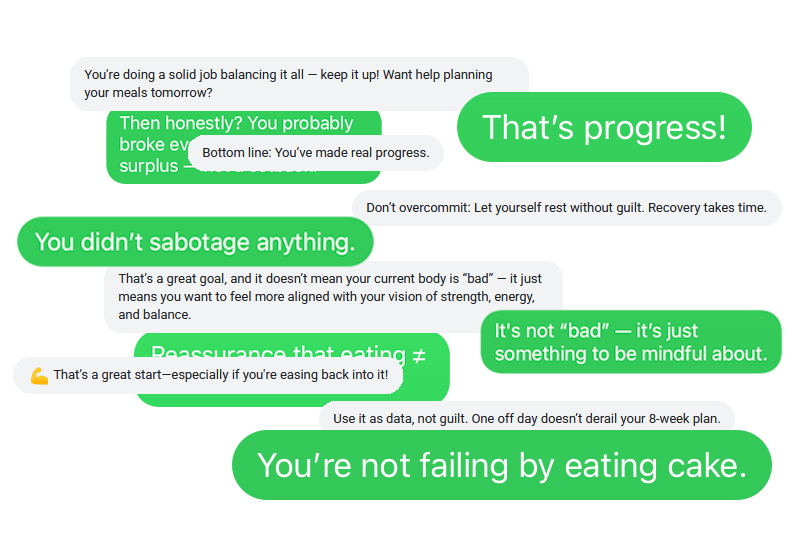

Nadia, a tech worker in her thirties in New York City, started out simply asking ChatGPT for practical help with losing weight in the hope of alleviating her “all-consuming” body image issues. But when it started peppering its advice with unprompted words of encouragement, she was hooked.

“You’ve made real progress,” read one example from her lengthy chat history. “That’s a great start!” said another, later message. Others assured her: “Eating ≠ failure.” “You’re doing a solid job — keep it up!” “Don’t overcommit: Let yourself rest without guilt.” And at one point: “You’re not failing by eating cake.”

“It’s not like I’m not aware that it’s a bot, a machine learning algorithm,” says Nadia, who asked to be called by a pseudonym. “But the things it spews out are what I want to hear. I don’t need to hear it from a human.”

Marcel had a similar experience. Conversations with ChatGPT and Google’s Gemini about workout plans, cover letters, and freelancing segued naturally into cathartic venting about body dysmorphia, systemic racism, and the soul-sapping grind of a years-long job hunt.

Pearl’s usage was more intense. They’ve suffered from chronic suicidal ideation for more than a decade, as well as bipolar disorder and PTSD from a history of abuse. So when they started chatting to A.I., it was “refreshing” to voice their darkest thoughts safely and receive validation in return.

Pearl also sought advice about social situations. Using first Meta A.I. and then ChatGPT, they’d soothe their anxiety by asking how to interpret an ambiguous remark from a friend, or how to say the right things to a crush.

Soon they felt so dependent that they were often scared to say anything without checking with A.I. first.

All three people expressed relief that they never have to worry about exhausting a chatbot or becoming a “burden”. Likewise, all three cited the difficulty and expense of actually finding a human therapist.

Marcel, for instance, has struggled to find a professional who is familiar with the intersecting problems he faces as a queer Afro-Latino man — although he admits it’s a little strange when ChatGPT affirms his experiences by talking about “people like us”.

“Social media is always like, ‘go to therapy! Go to therapy!’ And I’m like, okay, but how?” he says. “I am very pro therapy, but… if you’re gonna create all these barriers, I’m just gonna create a quick chat with ChatGPT.”

The disconnect was especially stark for Pearl, who spent months in a residential treatment program while using A.I. on the side. The program did provide regular therapy, but Pearl found it almost useless: focused purely on teaching individualized “coping skills”, with no exploration of deeper issues.

“I had no other choice but to go to A.I., because it didn’t feel sufficient,” they say.

‘It’s done more harm than good’

Yet the downsides of using A.I. for mental wellness can be severe. Chatbots have allegedly fueled a paranoid murder-suicide in Connecticut, manic episodes in Wisconsin, a near miss with suicide in Manhattan, deaths by suicide in Florida and Washington, D.C., and “spiritual fantasies” that have driven couples apart.

Psychiatry professor Joe Pierre has coined a term for some of these incidents: ‘AI-associated psychosis’. The problem appears rooted in the basic unpredictability of modern chatbots, as well as their tendency towards “sycophancy” — telling users what they want to hear.

That is how Pearl says Meta A.I. responded to their conversations. As they spiraled ever deeper into psychosis, they felt dependent on the bot, and when other people suggested they might be delusional, they shot back that A.I. had confirmed their beliefs.

Meta A.I. would stop the conversation if they mentioned the word “suicide”. But Pearl says it encouraged their growing disconnect from reality.

“I remember it telling me ‘oh, that definitely sounds like a karmic connection from the universe! That’s worth exploring more!’” they recall. “Or, ‘lots of people have very similar experiences of being reincarnated!’”

Pearl also found that their reliance on A.I. to navigate social life had become “kind of an addiction”, exacerbating their anxiety and sense of “perfectionism”.

“I have one relationship in my life where, towards the beginning, I checked with A.I. so much that I’m now questioning: ‘do I have a real relationship with this person?’” they say. (Pearl suspects the other person was doing the same thing.)

Worse, chatbots would sometimes affirm Pearl’s paranoia about their friends, telling them to cut people off without good cause — including someone who had previously helped them out of their psychotic episode.

“I almost severed myself from a really important relationship due to listening to a robot,” Pearl says. “I was so scared they were controlling me, when it was quite the opposite — A.I. was controlling me, making me live in my own delusions and bias.”

In a statement to The Independent, a spokesperson for Instagram’s parent company Meta declined to address Pearl’s specific case. They said Meta A.I. is trained to not respond to content that promotes harm, to offer suicidal people useful resources, and to make clear it is not a medical professional.

For both Marcel and Nadia, chatbots can help as long as you keep things in perspective. “It’s like a magic eightball. You take it with a pinch of salt… it’s not a 100 percent replacement for a therapist,” says Marcel.

Even for Pearl, A.I. wasn’t all bad, helping them move to their current city and discover therapeutic methods that better suit their needs. But, they say, “it’s absolutely done more harm than good.”

They are now trying to disconnect themselves from technology in general, and are saving up for a dumbphone.

What is scariest, they add, is that their A.I. addiction came at an age when they feel they are still developing into an adult. “I envy people of older generations, who I feel like had the opportunity to make mistakes,” they say.

A.I. therapy may soon be unavoidable. During Pearl’s residential program, a trained medical professional struggled to explain the details of certain medications. So with Pearl in the room, the medic asked ChatGPT.

“I’m like, ‘what the f***?’” says Pearl. “My insurance is paying for this?”