One night in the not-too-distant future, more than 100 AI agents will survey the entire market, process every earnings announcement from the previous 24 hours, generate trading strategies, back-test them, and run millions of simulations to stress-test them across likely risk scenarios.

The assets to be traded will include traditional securities and digital assets. With or without the click of a mouse button by a human overseer, an AI agent will execute the transactions across centralised exchanges, decentralised exchanges, and over-the-counter markets, all simultaneously.

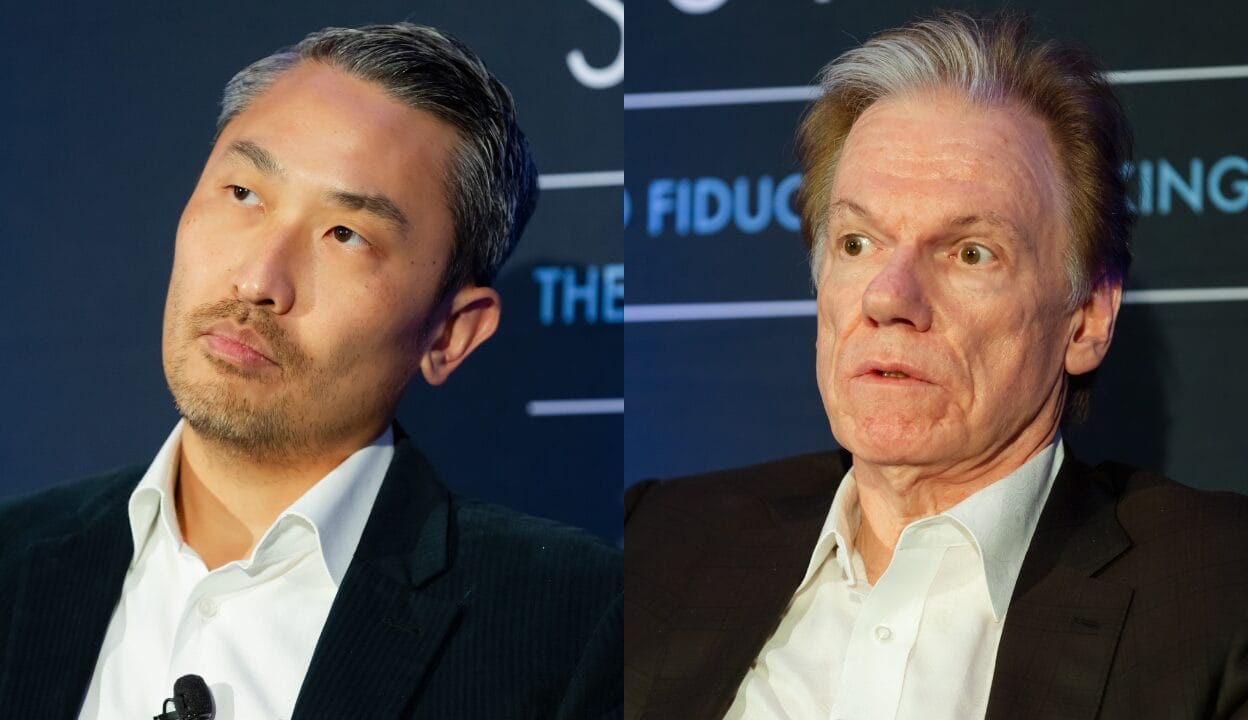

Will Cong, a professor and associate dean at Nanyang Business School and NTU College of Computing and Data Science in Singapore, told the Fiduciary Investors Symposium that this investing scenario is almost here.

“My sense is that by the end of this year, you already see agentic commerce, or more automated transactions, being done by AI agents,” Cong said. “That future has come.”

Cong said both the “on-chain economy” and the “agentic economy” will significantly affect how asset owners invest around the world.

But even as the role of AI in investing increases, responsibility for investment outcomes will not shift: ultimately, the human at the helm, typically the chief investment officer, will still be held accountable. So they’d better understand what their AI agents are doing, how they’re doing it, and why.

As traditional assets are increasingly tokenised so that they can be traded on-chain, the role of AI agents in identifying and executing profitable trades will grow.

“There is real-world asset tokenisation that’s very active. It’s still niche, based on the overall market cap, but in terms of variety, in terms of development, it has grown at least four or fivefold in the past year,” Cong said.

Cong said tokenisation is the representation of ownership of a real-world asset – including bonds, equities, infrastructure or units in a managed fund – as a digital token on a blockchain. That token can then be traded and settled on-chain, without the delays or costs associated with existing clearing and settlement systems.

Bridge to the on-chain economy

Tokenisation is therefore the bridge between real-world assets and the on-chain economy. Just as stablecoins bring traditional money on-chain, real-world asset tokenisation brings traditional assets on-chain. Once both money and assets exist in the same on-chain environment, AI agents can trade them autonomously, continuously, and across markets that were previously separate.
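To make the idea of money and assets sharing one on-chain environment concrete, here is a minimal sketch of delivery-versus-payment settlement on a toy ledger. It is an illustration under stated assumptions, not a description of any real blockchain or of a system Cong discussed: the `Ledger` class, the account names and the `USD_STABLE` and `BOND_TOKEN` labels are all hypothetical.

```python
class Ledger:
    """Toy shared ledger holding both a stablecoin and a tokenised asset.

    Hypothetical illustration only; real chains add signatures, fees,
    consensus and much more.
    """

    def __init__(self):
        self.balances = {}  # (owner, asset) -> integer amount

    def deposit(self, owner, asset, amount):
        key = (owner, asset)
        self.balances[key] = self.balances.get(key, 0) + amount

    def balance(self, owner, asset):
        return self.balances.get((owner, asset), 0)

    def settle(self, buyer, seller, asset, qty, cash, price):
        """Delivery versus payment: both legs happen, or neither does."""
        cost = qty * price
        # Check both legs before touching any balance.
        if self.balance(buyer, cash) < cost or self.balance(seller, asset) < qty:
            return False
        # Cash leg: buyer pays seller.
        self.balances[(buyer, cash)] -= cost
        self.balances[(seller, cash)] = self.balance(seller, cash) + cost
        # Asset leg: seller delivers tokens to buyer.
        self.balances[(seller, asset)] -= qty
        self.balances[(buyer, asset)] = self.balance(buyer, asset) + qty
        return True


ledger = Ledger()
ledger.deposit("fund_a", "USD_STABLE", 1000)
ledger.deposit("fund_b", "BOND_TOKEN", 10)

# fund_a buys 5 bond tokens at 100 each; payment and delivery settle together.
first = ledger.settle("fund_a", "fund_b", "BOND_TOKEN", 5, "USD_STABLE", 100)

# A second trade that fund_a cannot afford is rejected and changes nothing.
second = ledger.settle("fund_a", "fund_b", "BOND_TOKEN", 10, "USD_STABLE", 100)
```

Because the cash and the asset sit on the same ledger, the trade needs no separate clearing step: the check and both transfers happen in one operation, which is the property that would let an automated agent trade without waiting on an external settlement cycle.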

Cong said even private market assets may lend themselves to tokenisation, which would mean they could be traded in real time and autonomously, giving them a radically different liquidity profile and revolutionising pricing. Tokenisation might even eliminate the illiquidity premium typically associated with such assets.

But the point of tokenisation is that it opens the door to AI-agentic trading. Cong said AI has traditionally been applied to investment through predictive modelling, such as forecasting returns from asset-class characteristics, whereas generative modelling can optimise directly for arbitrary portfolio objectives.

“You give me any portfolio management objective, and the X variables are no longer the primitive predictors. They are the parameters of your trading strategy, the underlying weights of your asset combination,” Cong said.

A generative model can pursue complex, natural-language investment objectives, such as “maximise cumulative returns over 12 months, subject to never losing more than 50 per cent of assets under management, and subject to applicable tax rules in a given region”.

“That outcome is not in fundamental data, it’s not in market signals,” Cong said. “How do you optimise such an arbitrary objective directly? We couldn’t do that, but with generative modelling now we can.”
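As a rough illustration of what optimising an objective directly can look like, the sketch below treats the portfolio weights themselves as the variables being searched and scores each candidate against a simplified version of the objective quoted above: average 12-month cumulative return, rejected outright if any simulated path ever loses more than 50 per cent. It is not Cong's method. A plain random search stands in for a generative model, the tax condition is left out, and the three assets, their return parameters and every function name are hypothetical, with synthetic data used throughout.

```python
import random

# Hypothetical monthly (mean, volatility) pairs for three made-up assets.
ASSETS = [(0.004, 0.01), (0.008, 0.04), (0.012, 0.09)]


def simulate_paths(rng, n_paths=200, n_months=12):
    """Synthetic monthly returns, indexed paths[path][month][asset]."""
    return [[[rng.gauss(mu, sigma) for mu, sigma in ASSETS]
             for _ in range(n_months)]
            for _ in range(n_paths)]


def objective(weights, paths, max_loss=0.5):
    """Mean cumulative return over all paths, or -inf if any path ever
    falls below (1 - max_loss) of starting assets."""
    total = 0.0
    for path in paths:
        wealth = 1.0
        for month in path:
            wealth *= 1.0 + sum(w * r for w, r in zip(weights, month))
            if wealth < 1.0 - max_loss:
                return float("-inf")  # loss limit breached: reject candidate
        total += wealth - 1.0
    return total / len(paths)


def random_weights(rng, n):
    """Random long-only weights that sum to 1."""
    raw = [rng.random() for _ in range(n)]
    scale = sum(raw)
    return [x / scale for x in raw]


def random_search(paths, n_candidates=300, seed=0):
    """Score candidate weight vectors directly against the objective."""
    rng = random.Random(seed)
    best_w, best_score = None, float("-inf")
    for _ in range(n_candidates):
        w = random_weights(rng, len(ASSETS))
        score = objective(w, paths)
        if score > best_score:
            best_w, best_score = w, score
    return best_w, best_score


paths = simulate_paths(random.Random(42))
weights, score = random_search(paths)
print([round(w, 3) for w in weights], round(score, 4))
```

Note that nothing here forecasts returns first and then builds a portfolio from the forecasts. The search variables are the weights, and the objective, including its loss limit, is evaluated as a whole for each candidate.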

The buck stops with the CIO

There is always a risk that an AI agent may pursue the investment objective it was given in a way that is unexpected or not easily understood. In these situations, especially if outcomes fall short of expectations, there must still be accountability within the asset owner organisation, and the buck ultimately stops, just as it does now, with the CIO.

“How do we hold accountability when mistakes or bad things happen? I think there are, in general, two routes,” Cong said.

“One route is you create an ID for the AI agents, and they are punished directly. But the challenge is do they understand punishment? Is that fully aligned with us?

“The other one is, we still have humans that are strongly sort of bonded or paired with AI agents, and they take the residual cost. It’s just like a manager. It’s true that maybe my people I supervise make mistakes and all that, but I need to bear the residual responsibility, and that’s one structure that we are familiar with. We know how to economically design that structure. So in the immediate future, I would say that’s an easier route.”

Cong said AI agents are not neutral instruments: his research found that machines carry systematic biases that are not always the same as human ones.

“For certain preference-based biases, they are human-like, but for belief-based biases, calculations of probabilities and so forth, they have their own mistakes.”

There are also cybersecurity issues to consider: a bad actor could hack an agentic trading system and reprogram it to cause harm – that is, to deliberately lose money. On-chain trading environments mitigate this risk somewhat, because they are decentralised and openly auditable.

“When we do stress tests traditionally, we have to hire a professional team who knows programming and knows what back doors they leave,” he said.

“But now, if you have an environment where it’s openly auditable, you can already stress-test the environment.” He said distributed security monitoring across many participants may reduce vulnerability.

“Because we are in an on-chain environment that’s more decentralised, that’s more auditable, openly auditable, maybe the community watch is going to help this significantly.”